Imagine downloading a five-year text history with your closest friends. You are holding a 15MB file filled with inside jokes, midnight arguments, and thousands of fragmented timestamps. You paste the first chunk into a generic conversational interface, hoping for a fun summary. Instead, the system stalls, loses track of who is speaking, or invents conversations that never happened. The current shift from generic language models to specialized measurement architecture aims to solve this exact problem. Rather than relying on a standard AI chatbot, users are moving toward purpose-built recap apps that process massive text exports securely and extract structured narratives without losing context.

My daily work as a backend developer involves structuring cloud-based communication services and API integrations. I deal with raw, unstructured data constantly. People assume that feeding a raw chat log into a standard language model is a simple task. It is not. Chat histories are messy, non-linear, and dense. To get actual value from your messaging history, you need a methodical approach to data processing.

Step 1: Why are our messaging habits demanding better technical infrastructure?

Before attempting to parse your own data, it helps to understand the scale of the problem. We are generating more conversational data than ever before. According to recent mobile industry data, global app sessions and consumer spending continue to reach record highs, which points to deeper mobile engagement. As our digital interactions deepen, the volume of text data sitting on our devices keeps growing with them.

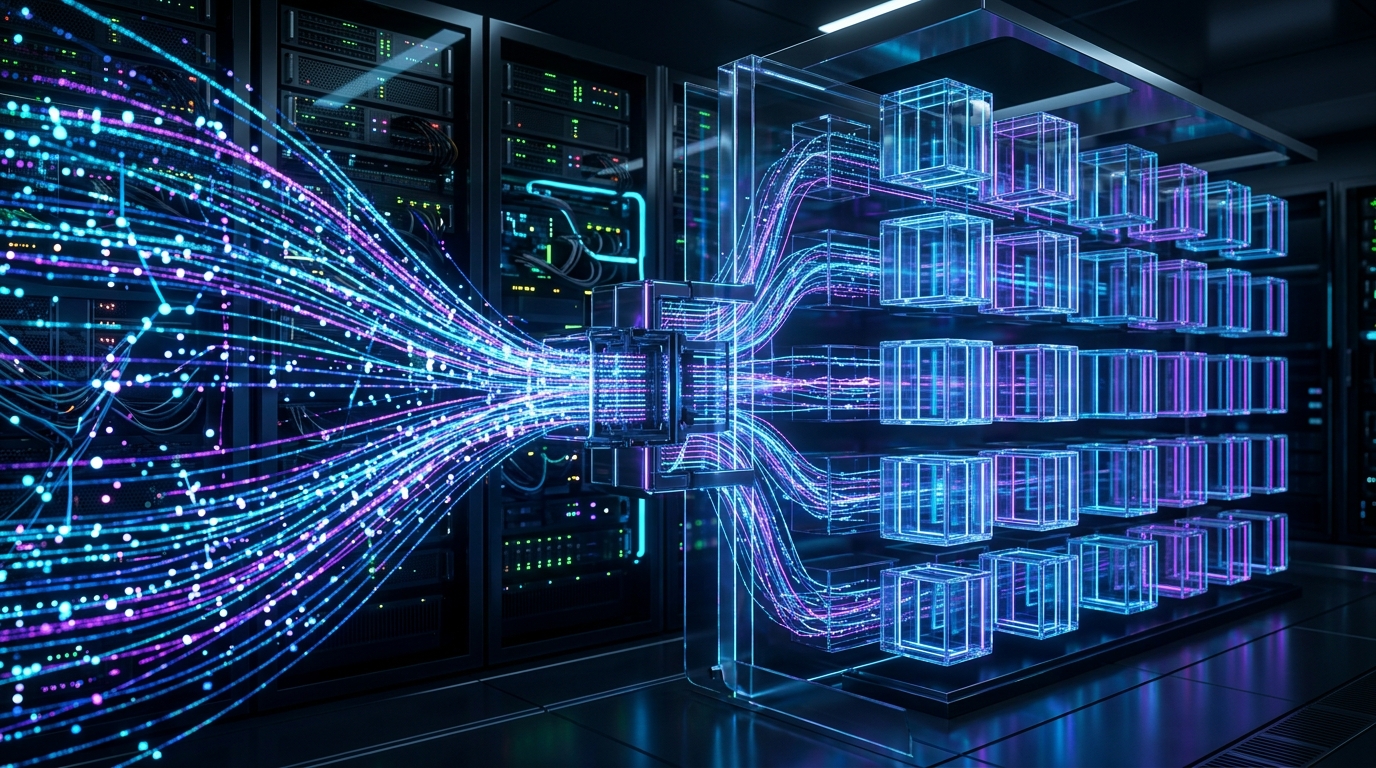

Recent trends highlight a pivotal shift toward "AI + Measurement Architecture." This indicates a fundamental change in how we handle data. Artificial intelligence is no longer just a standalone tool you chat with; it is becoming the foundational infrastructure used for complex data segmentation and end-to-end processing. If you want to analyze your communication patterns, you need tools built on this kind of dedicated architecture, not just a blank text prompt.

Tip for this stage: Define your actual goal. This approach is specifically for individuals, friends, and small teams who want to turn their personal chat exports into entertaining, structured summaries. It is NOT for enterprise CRM data analysts looking to build automated customer support pipelines.

Step 2: Why do general platforms fail at context retention?

I frequently review the queries hitting backend analysis systems. People searching for solutions often type variants like "cha t gpt", "chat gp t", or even "wchat gpt" into app stores. Whether a user is searching for "chàt gpt" or "gbt char", or testing out interfaces like DeepSeek and Grok, the foundational issue remains the same: token limits.

Every AI chat system processes text in "tokens." When you paste a large WhatsApp log into a generic chatbot, the model counts the timestamps, the names, and the system messages (like "Image omitted") as tokens. The input quickly exceeds the model's context window, the maximum number of tokens it can take into account at once. Whatever falls outside that window is truncated or dropped, so by the time the model is working through January's messages, November's may no longer be available to it. The narrative collapses.
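
To make the token problem concrete, here is a rough sketch of a size check. It assumes the common rule of thumb of about four characters per token for English text and a hypothetical 128,000-token context window; both numbers are illustrative assumptions, and real tokenizers and models differ.

```python
# Rough estimate only: real tokenizers split text differently, and the
# 4-characters-per-token ratio is a rule of thumb, not an exact figure.
def estimate_tokens(text: str, chars_per_token: int = 4) -> int:
    """Approximate the number of tokens in a piece of text."""
    return len(text) // chars_per_token


def fits_in_context(text: str, context_window_tokens: int = 128_000) -> bool:
    """Check the estimate against a hypothetical context window size."""
    return estimate_tokens(text) <= context_window_tokens


if __name__ == "__main__":
    sample = "a" * 15_000_000  # stand-in for a roughly 15MB export
    print(estimate_tokens(sample), fits_in_context(sample))
```

Under these assumptions, a 15MB export works out to several million tokens, far more than the assumed window can hold in one pass.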

Furthermore, general-purpose models are trained to answer questions, not to act as data analysts for raw JSON or TXT files. When they encounter slang or heavy context switching, both typical in group chats, they are more likely to hallucinate. Specialized infrastructure is required to filter out the noise before the model even begins its analysis.
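
A minimal sketch of that noise filter might look like the following. The placeholder wording is an assumption on my part: it varies by app, version, and language, so the pattern would need adjusting for a real export.

```python
import re

# Assumed placeholder wording (e.g. "Image omitted", "<Media omitted>").
# Real exports use different text depending on the app and its language.
PLACEHOLDER_PATTERN = re.compile(
    r"\b(?:image|video|audio|sticker|gif|media|document) omitted\b",
    re.IGNORECASE,
)


def strip_noise(lines: list[str]) -> list[str]:
    """Drop lines that only record an omitted attachment."""
    return [line for line in lines if not PLACEHOLDER_PATTERN.search(line)]
```

This simple version also drops a genuine message that happens to contain one of those phrases, which is usually an acceptable trade-off for a recap.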

Step 3: How do you identify the right privacy and processing framework?

Exporting personal messaging data requires strict privacy considerations. If you are uploading a year's worth of personal interactions, you must evaluate the tool's data handling policies.

When selecting an application for this task, use these criteria:

- Data Ephemerality: Does the application store your messages permanently, or does it discard the raw text immediately after the analysis is complete?

- Platform Specificity: Can it natively read the specific format exported by your messaging app, recognizing where one message ends and a reply begins?

- Output Format: Does it provide a flat wall of text, or a visually engaging summary (like a "Wrapped" style output)?
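
To illustrate what "platform specificity" means in practice, here is a small parser sketch. The line format `YYYY-MM-DD HH:MM - Sender: text` is a hypothetical one chosen for the example; real exports differ by app, operating system, and locale, and the `Message` type and `parse_export` function are my own names.

```python
import re
from datetime import datetime
from typing import NamedTuple


class Message(NamedTuple):
    timestamp: datetime
    sender: str
    text: str


# Hypothetical export format: "2023-11-05 21:14 - Alice: Dinner at 8?"
LINE_PATTERN = re.compile(r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}) - ([^:]+): (.*)$")


def parse_export(lines: list[str]) -> list[Message]:
    """Turn raw export lines into messages, merging multi-line messages."""
    messages: list[Message] = []
    for line in lines:
        match = LINE_PATTERN.match(line)
        if match:
            stamp, sender, text = match.groups()
            timestamp = datetime.strptime(stamp, "%Y-%m-%d %H:%M")
            messages.append(Message(timestamp, sender, text))
        elif messages:
            # No timestamp prefix: treat the line as a continuation
            # of the previous message rather than a new one.
            last = messages[-1]
            messages[-1] = last._replace(text=last.text + "\n" + line)
    return messages
```

The continuation branch is the part generic tools tend to miss: without it, the second line of a long message looks like text from nobody.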

Some general-purpose tools may use your inputs to train future models unless you opt out. A dedicated tool should isolate your session data. The rising focus on data consent is clear in the broader market; reports on App Tracking Transparency (ATT) show that users are becoming significantly more deliberate about where their data goes. Your choice of analysis tools should reflect that caution.

Step 4: What is the benefit of deep context segmentation?

From an engineering perspective, the solution to processing massive text files is deep context segmentation. Instead of forcing an entire file into a single prompt, a well-architected system breaks the document into logical blocks based on time gaps or topic shifts.
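
As a sketch of the time-gap half of that idea, the function below starts a new block whenever two consecutive messages are more than six hours apart. The six-hour threshold and the `(timestamp, sender, text)` tuple shape are assumptions for illustration; topic-shift detection is not covered here.

```python
from datetime import datetime, timedelta


def segment_by_gap(messages, max_gap=timedelta(hours=6)):
    """Split (timestamp, sender, text) tuples into blocks at long silences.

    Assumes the messages are already sorted by timestamp.
    """
    segments = []
    current = []
    previous_time = None
    for message in messages:
        timestamp = message[0]
        if previous_time is not None and timestamp - previous_time > max_gap:
            # The silence was long enough to count as a new conversation.
            segments.append(current)
            current = []
        current.append(message)
        previous_time = timestamp
    if current:
        segments.append(current)
    return segments
```

Each resulting block is small enough to summarize on its own, and the boundaries tend to line up with how people actually remember conversations: by evening, by trip, by argument.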

While generic AI summaries often strip the nuance from personal messaging, segmentation algorithms map the relationships between participants first. They identify who the primary talkers are, which phrases are used most frequently, and when peak activity occurs. Only after this metadata is structured does the system pass the organized blocks to the language model backend for narrative generation.
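
The metadata pass can be sketched with a few counters. This is a simplified illustration, not how any particular product does it: it counts single words rather than phrases, and the `summarize` function and its output keys are hypothetical names.

```python
from collections import Counter
from datetime import datetime


def summarize(messages):
    """Compute simple stats from (timestamp, sender, text) tuples."""
    senders = Counter(sender for _, sender, _ in messages)
    hours = Counter(timestamp.hour for timestamp, _, _ in messages)
    # Simplification: count lowercase words instead of multi-word phrases.
    words = Counter(
        word for _, _, text in messages for word in text.lower().split()
    )
    return {
        "top_senders": senders.most_common(3),
        "peak_hour": hours.most_common(1)[0][0] if hours else None,
        "top_words": words.most_common(5),
    }
```

A real pipeline would likely filter out common filler words before counting, otherwise "the" and "ok" win every time.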

This is why searching for "chats gpt" or "chatgtp" usually leads to frustration. The standard web interface simply lacks this preprocessing layer. It treats your valuable history as a single, overwhelming string of characters.

Step 5: How do you select the correct tool for narrative summaries?

If you want a detailed, entertaining breakdown of your conversations without manual prompt engineering, you need an application built specifically for that workflow. Wrapped AI Chat Analysis Recap is designed for that exact purpose. It takes the exported text file, applies the necessary context segmentation, and generates a structured recap that highlights inside jokes, participant behavior, and conversational trends.

Working closely with infrastructure teams across Dynapps LTD, I have observed that users prefer visual, story-like outputs over raw statistical tables. You do not just want to know that you sent 4,000 messages; you want to know what those messages say about your group's dynamic. A dedicated recap tool handles the computational complexity, formatting the output into shareable, digestible insights.

Practical Q&A: What else should you consider before uploading?

To finalize this process, I compiled answers to the most frequent technical questions I receive regarding chat exports:

Does the file size matter?

Yes. A text export over 20MB usually spans years of conversation and is padded with placeholders for media attachments (the lines labeled as omitted) and system messages. Specialized tools chunk this data automatically, whereas a standard Gemini or ChatGPT interface will often reject the upload or truncate the file.
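
For a sense of what automatic chunking involves, here is a minimal sketch that packs whole lines into chunks under a character budget. The 8,000-character default is an arbitrary assumption, and `chunk_lines` is a hypothetical helper, not the method of any specific tool.

```python
def chunk_lines(lines, max_chars=8000):
    """Group whole lines into chunks of at most max_chars characters.

    A single line longer than max_chars is kept intact as its own chunk.
    """
    chunks = []
    current = []
    size = 0
    for line in lines:
        line_size = len(line) + 1  # +1 accounts for the joining newline
        if current and size + line_size > max_chars:
            chunks.append("\n".join(current))
            current = []
            size = 0
        current.append(line)
        size += line_size
    if current:
        chunks.append("\n".join(current))
    return chunks
```

Splitting on line boundaries rather than at a fixed byte offset keeps every message whole, so no chunk starts halfway through a sentence.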

Why does my summary look generic in standard chatbots?

Because general AI models default to a neutral, informative tone. A specialized app applies pre-configured, personality-driven prompts to the segmented data, resulting in an engaging, culturally aware summary that actually sounds like human friends interacting.

Will changing my device affect the export?

Usually, no. As long as the messaging app generates a standard TXT or ZIP export of your text logs, a properly built parsing engine will read the timestamps and text strings accurately, regardless of the operating system.
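
As a sketch of how a parser can stay indifferent to the source device, the loader below accepts either a plain TXT file or a ZIP archive and normalizes the byte-order mark and line endings. Taking the first `.txt` entry in the archive and assuming UTF-8 are both simplifications, and `read_export_text` is a hypothetical name.

```python
import zipfile
from pathlib import Path


def read_export_text(path):
    """Load chat text from a .txt file or from the first .txt in a ZIP."""
    path = Path(path)
    if zipfile.is_zipfile(path):
        with zipfile.ZipFile(path) as archive:
            names = [n for n in archive.namelist() if n.lower().endswith(".txt")]
            if not names:
                raise ValueError("no .txt file found in the archive")
            raw = archive.read(names[0])
    else:
        raw = path.read_bytes()
    # "utf-8-sig" drops a leading byte-order mark if one is present;
    # undecodable bytes are replaced instead of raising an error.
    text = raw.decode("utf-8-sig", errors="replace")
    # Normalize Windows line endings so later parsing sees one format.
    return text.replace("\r\n", "\n")
```

After this step, everything downstream works on one clean string, whichever phone or desktop produced the file.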

Processing your communication history should not require a degree in data science. By understanding the limitations of general tools and utilizing purpose-built infrastructure, you can turn years of chaotic messaging into clear, entertaining insights.